- Home

- Services

- About

- News

- Contact

- Twiztid the darkness album

- Macrium reflect free edition vs paid

- Mac internal hard drive caddy or enclosure

- Call of duty cd key xbox one

- Memory card 2010 macbook pro 13 inch

- Free word download for windows 10

- Data analysis programs hive doot

- Intel r wifi link 5100 agn properties

- How to reduce size of pdf further

- Star wars free to play chat

- Uk harry potter audiobook download

- El capitan download dmg fie

- Lenovo support drivers ideapad 500s

- Chessmaster for mac download

- Overwatch free download mega

- Helvetica neue light font

- Where to install expansions for avenger vst

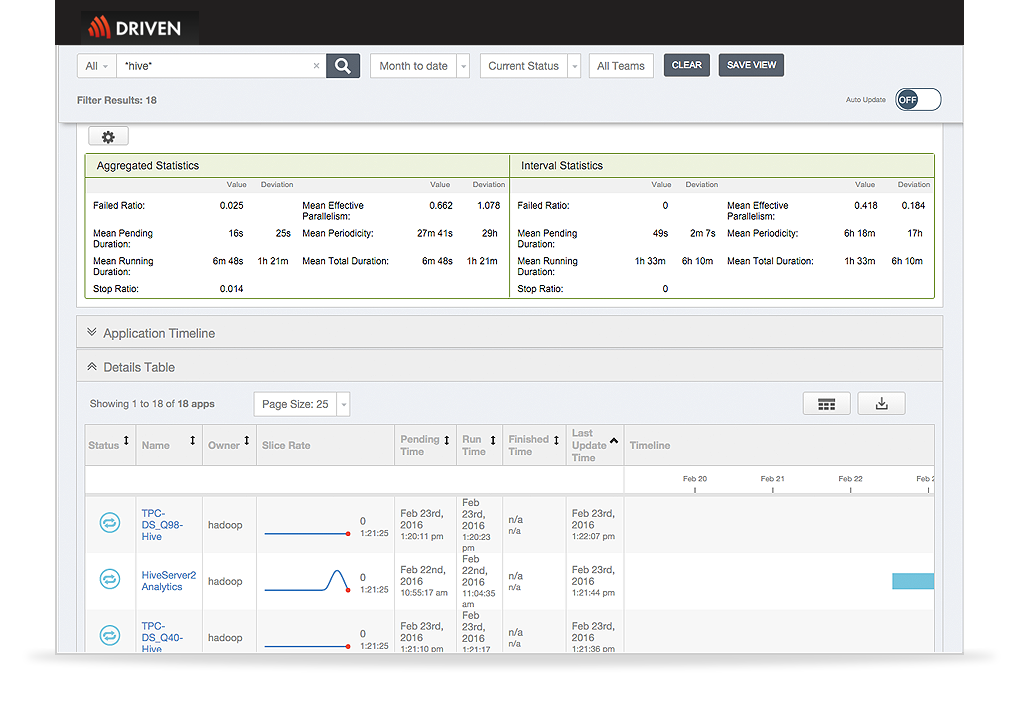

Let's now take a look at Hive data modeling, which consists of tables, partitions, and buckets: Finally, we have a bidirectional communication to fetch and send results back to the client.In addition to this, the execution engine also communicates bidirectionally with the metastore to perform various operations, such as create and drop tables.The execution engine acts as a bridge between the Hive and Hadoop to process the query.

Data analysis programs hive doot driver#

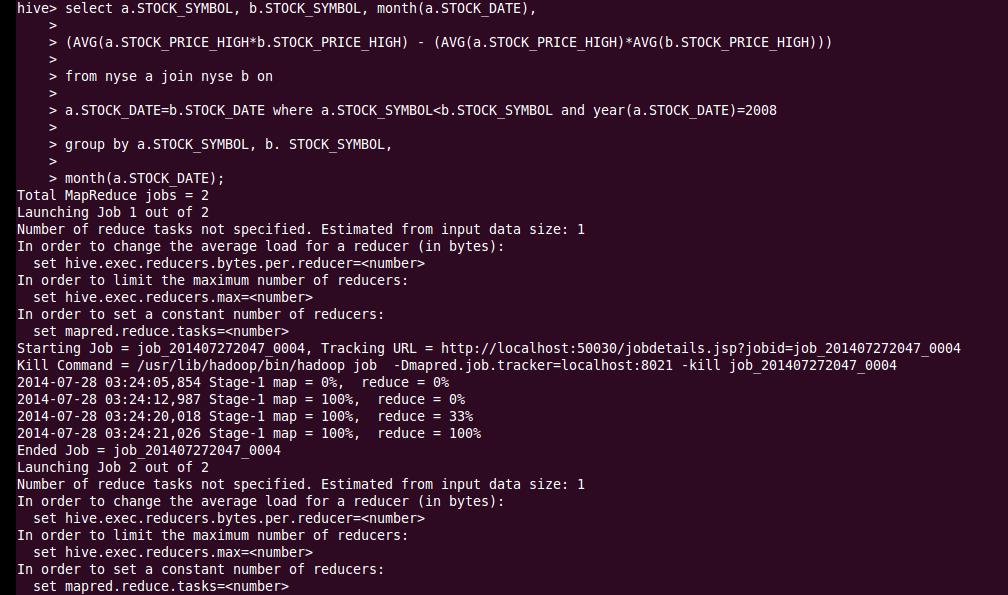

Now, the driver sends the execution plan to the execution engine.The compiler gathers this information and sends the plan back to the driver.The metastore responds with the metadata.After this, the compiler gets the metadata from the metastore.Then the driver asks for the plan, which refers to the query execution.We execute a query, which goes into the driver.All of that goes into the MapReduce and the Hadoop file system. Underneath the user interface, we have driver, compiler, execution engine, and metastore. Data Flow in Hiveĭata flow in the Hive contains the Hive and Hadoop system. In this Hive tutorial, let's understand how does the data flow in the Hive. If you have read our other Hadoop blogs, you'll know that these are on commodity machines and are linearly scalable, which means they're very affordable. Finally, we have distributed storage, which is HDFS.

Hive uses the MapReduce framework to process queries. It stores metadata for Hive tables, and you can think of this as your schema. Metastore is a repository for Hive metadata. Executor - In the final step, the tasks are executed.Optimizer - Optimized logical plan in the form of a graph of MapReduce and HDFS tasks is obtained.Compiler - The Hive driver passes the query to the compiler, where it is checked and analyzed.Up next is the Hive driver, which is responsible for all the queries submitted. In addition to the above, we also have the Hive web interface, or GUI, where programmers execute Hive queries. All these client requests are submitted to the Hive server. Then we have an ODBC (Open Database Connectivity) application connected through the ODBC Driver. The JDBC application is connected through the JDBC Driver. Next, we have the JDBC (Java Database Connectivity) application and Hive JDBC Driver. The Hive Server is based on Thrift, so it can serve requests from all of the programming languages that support Thrift. The Hive client supports different types of client applications in different languages to perform queries. We start with the Hive client, who could be the programmer who is proficient in SQL, to look up the data that is needed. The architecture of the Hive is as shown below. In the next section of the Hive tutorial, let's now take a look at the architecture of the Hive. These queries are converted into MapReduce tasks, and that accesses the Hadoop MapReduce system. Hive uses a query language called HiveQL, which is similar to SQL.Īs seen from the image below, the user first sends out the Hive queries. Hive is a data warehouse system that is used to query and analyze large datasets stored in the HDFS. The idea was to incorporate the concepts of tables and columns, just like SQL. Users were comfortable with writing queries in SQL (Structured Query Language), and they wanted a language similar to SQL. 
Not all users were well-versed with Java and other coding languages.

With MapReduce, users were required to write long and extensive Java code. If you have read our previous blogs, you would know that big data is nothing but massive amounts of data that cannot be stored, processed, and analyzed by traditional systems.Īs we know, Hadoop uses MapReduce to process data. Facebook adopted the Hadoop framework to manage their big data. Hive has a fascinating history related to the world's largest social networking site: Facebook. Looking forward to becoming a Hadoop Developer? Check out the Big Data Hadoop Certification Training Course and get certified today. In this Hive tutorial, let's start by understanding why Hive came into existence. In other words, in the world of big data, Hive is huge. It supports easy data summarization, ad-hoc queries, and analysis of vast volumes of data stored in various databases and file systems that integrate with Hadoop. If you have had a look at the Hadoop Ecosystem, you may have noticed the yellow elephant trunk logo that says HIVE, but do you know what Hive is all about and what it does? At a high level, some of Hive's main features include querying and analyzing large datasets stored in HDFS.
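To give a feel for how Hive organizes a table's data into partitions and buckets, here is a minimal Python sketch. It is an illustration under stated assumptions, not Hive's actual implementation: the table name `page_views`, the partition column `country`, the bucketing column `user_id`, and the bucket count of 4 are all hypothetical. It relies on two general ideas: each partition is stored as a subdirectory named `column=value`, and a row's bucket is chosen by hashing the bucketing column modulo the number of buckets. The hash is simplified here to the integer value itself.

```python
from collections import defaultdict

NUM_BUCKETS = 4  # hypothetical bucket count for the example table


def bucket_for(user_id: int, num_buckets: int = NUM_BUCKETS) -> int:
    """Pick a bucket by hashing the bucketing column modulo the bucket count.

    Simplification: the (non-negative) integer value is used as its own hash.
    """
    return (user_id & 0x7FFFFFFF) % num_buckets


def layout(rows, table="page_views"):
    """Group rows by partition directory, then by bucket index."""
    placed = defaultdict(lambda: defaultdict(list))
    for row in rows:
        # One subdirectory per distinct value of the partition column.
        partition_dir = f"{table}/country={row['country']}"
        placed[partition_dir][bucket_for(row["user_id"])].append(row["user_id"])
    return {part: dict(buckets) for part, buckets in placed.items()}


# Hypothetical sample rows.
rows = [
    {"user_id": 1, "country": "US"},
    {"user_id": 5, "country": "US"},
    {"user_id": 6, "country": "IN"},
    {"user_id": 10, "country": "US"},
]

for partition_dir, buckets in sorted(layout(rows).items()):
    print(partition_dir, buckets)
```

A query that filters on `country` only needs to read the matching partition directory, and rows with the same `user_id` always land in the same bucket, which is what makes sampling and some joins cheaper.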
